
In digital music processing technology, quantization is the studio-software process of transforming performed musical notes, which may have some imprecision due to expressive performance, to an underlying musical representation that eliminates the imprecision. The process results in notes being set on beats and on exact fractions of beats.[1]

The purpose of quantization in music processing is to provide a more beat-accurate timing of sounds.[2] Quantization is frequently applied to a record of MIDI notes created by the use of a musical keyboard or drum machine. Additionally, the phrase 'pitch quantization' can refer to pitch correction used in audio production, such as using Auto-Tune.

Description

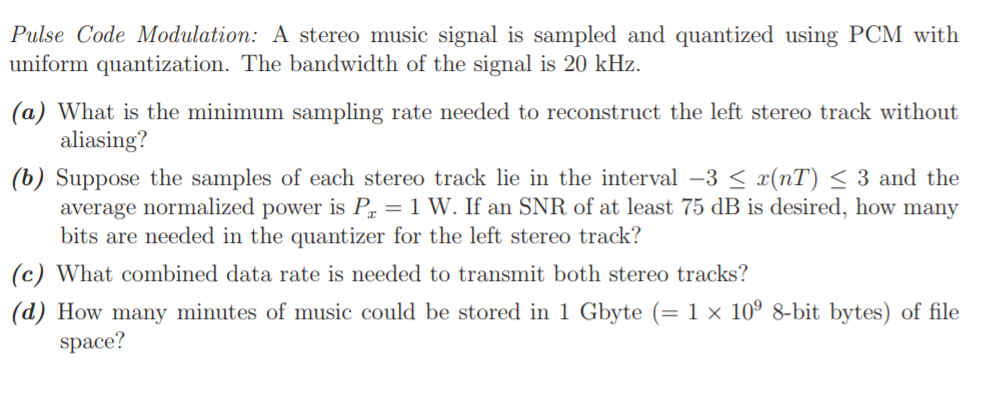

A frequent application of quantization in this context is in MIDI application software or hardware. MIDI sequencers typically include quantization among their edit commands. The resolution of the timing grid (for example, sixteenth notes) is set beforehand. When one instructs the music application to quantize a certain group of MIDI notes in a song, the program moves each note to the closest point on the timing grid. Quantization in MIDI is usually applied to Note On messages and sometimes Note Off messages; some digital audio workstations shift the entire note by moving both messages together. Sometimes quantization is applied in terms of a percentage, to partially align the notes to a certain beat. Using a percentage of quantization preserves some of the natural human timing nuances.
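The grid snapping and percentage quantization described above can be sketched in a few lines of Python. This is a minimal illustration, not the algorithm of any particular sequencer: the function name `quantize_ticks`, the tick-based time unit, and the `strength` parameter (0.0 to 1.0 in place of a percentage) are assumptions made for the example.

```python
import math


def quantize_ticks(onsets, grid, strength=1.0):
    """Move each note onset toward its nearest grid line.

    onsets   -- note start times in ticks (hypothetical unit)
    grid     -- grid spacing in ticks, e.g. 120 for sixteenth notes at 480 ticks per quarter
    strength -- 0.0 leaves the timing untouched, 1.0 snaps fully to the grid
    """
    result = []
    for t in onsets:
        # Closest grid point; floor(x + 0.5) rounds ties upward.
        nearest = math.floor(t / grid + 0.5) * grid
        # Move only part of the way when strength < 1.0.
        result.append(t + strength * (nearest - t))
    return result


played = [5, 118, 250]
print(quantize_ticks(played, 120))        # full quantization
print(quantize_ticks(played, 120, 0.5))   # 50% quantization keeps half of the deviation
```

To shift a whole note rather than only its start, the same offset would be added to the Note Off time as well.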

The most difficult problem in quantization is determining which rhythmic fluctuations are imprecise or expressive (and should be removed by the quantization process) and which should be represented in the output score. For instance, a simple children's song should probably have very coarse quantization, resulting in few different notes in output. On the other hand, quantizing a performance of a piano piece by Arnold Schoenberg, for instance, should result in many smaller notes, tuplets, etc.

In recent years audio quantization has come into play, with the plug-in Beat Detective on all versions of Pro Tools being used regularly on modern-day records to tighten the playing of drums, guitar, bass, etc.[3]


Retrieved from 'https://en.wikipedia.org/w/index.php?title=Quantization_(music)&oldid=898424174'

Quantization is the process of constraining an input from a continuous or otherwise large set of values (such as the real numbers) to a discrete set (such as the integers). The terms quantization and discretization are often denotatively synonymous but not always connotatively interchangeable.


Retrieved from 'https://en.wikipedia.org/w/index.php?title=Quantization&oldid=854213368'

The most common tool used to generate MIDI messages is an electronic keyboard. These messages may be routed to a digital synthesizer inside the keyboard, or they may be patched to some other MIDI instrument, like your computer.

When a key is pressed, the keyboard creates a 'note on' message. This message carries two pieces of information: which key was pressed (called 'note') and how fast it was pressed (called 'velocity').

'Note' describes the pitch of the pressed key with a value between 0 and 127. I've copied the table in fig 2 from NYU's website; it lists all the MIDI notes and their standard musical notation equivalents. You can see that MIDI note 60 is middle C (C4).

'Velocity' is a number between 0 and 127 that is usually used to describe the volume (gain) of a MIDI note (higher velocity = louder). Sometimes different velocities also create different timbres in an instrument; for example, a MIDI flute may sound more frictional at a higher velocity (as if someone were blowing into it strongly) and more sinusoidal, or cleaner, at lower velocities. Higher velocity may also shorten the attack of a MIDI instrument. Attack is a measurement of how long it takes for a sound to go from zero to maximum loudness. For example, a violin playing quick, staccato notes has a much faster attack than one playing longer, sustained notes.

Something to remember: not all keyboards are velocity sensitive. If you hear no difference in the sound produced by a keyboard no matter how hard you hit the keys, then you are not sending variable velocity information from that instrument. Computer keyboards are not velocity sensitive; if you are using your computer's keys to play notes into a software sequencer, all the notes will have the same velocity.

When a key is released, the keyboard creates another MIDI message, a 'note off' message. These messages also contain 'note' information to ensure that they signal the end of the right MIDI note. This way, if you are pressing two keys at once and release one of them, the note off message will not signal the end of both notes, only the one you've released. Sometimes note off messages will also contain velocity information based on how quickly you've released the key. This may tell a MIDI instrument something about how quickly it should dampen the note.

Figure 1 shows how these MIDI messages are typically represented in MIDI sequencing software environments (in this case GarageBand). Each of the notes in the sequence is started by a note on message and ended with a note off message. In GarageBand the velocity attached to the note on message is represented by the color of the note. In the image above, the high velocity notes are white and the lower velocity notes are grey. Figs 3 and 4 show MIDI notes recorded in Ableton. Again you can see that the velocity associated with the note on message is represented by the color of the MIDI note: more saturated = higher velocity. Also notice that the velocity is indicated by a line with a circle on top at the bottom of the screen. By selecting one of your MIDI notes you can see the velocity associated with it; in fig 4 the D4 note has a velocity of 57.
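The mapping between MIDI note numbers and note names can be worked out with integer arithmetic. The sketch below assumes the octave convention used in this text, where note 60 is C4; some software labels the same note C3. The helper name `midi_note_name` and the choice of sharps over flats are illustrative assumptions.

```python
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def midi_note_name(note):
    """Return a name such as 'C4' for a MIDI note number between 0 and 127."""
    if not 0 <= note <= 127:
        raise ValueError("MIDI note numbers run from 0 to 127")
    # Twelve semitones per octave; subtracting 1 makes note 60 come out as C4.
    octave = note // 12 - 1
    return f"{NOTE_NAMES[note % 12]}{octave}"


print(midi_note_name(60))  # middle C
print(midi_note_name(62))  # the D above middle C
```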

The simplest way to quantize a signal is to choose the digital amplitude value closest to the original analog amplitude. This example shows the original analog signal (green), the quantized signal (black dots), the signal reconstructed from the quantized signal (yellow) and the difference between the original signal and the reconstructed signal (red). The difference between the original signal and the reconstructed signal is the quantization error and, in this simple quantization scheme, is a deterministic function of the input signal.

Quantization, in mathematics and digital signal processing, is the process of mapping input values from a large set (often a continuous set) to output values in a (countable) smaller set, often with a finite number of elements. Rounding and truncation are typical examples of quantization processes. Quantization is involved to some degree in nearly all digital signal processing, as the process of representing a signal in digital form ordinarily involves rounding. Quantization also forms the core of essentially all lossy compression algorithms.

The difference between an input value and its quantized value (such as round-off error) is referred to as quantization error. A device or algorithmic function that performs quantization is called a quantizer. An analog-to-digital converter is an example of a quantizer.

Mathematical properties

Because quantization is a many-to-few mapping, it is an inherently non-linear and irreversible process (i.e., because the same output value is shared by multiple input values, it is impossible, in general, to recover the exact input value when given only the output value).

The set of possible input values may be infinitely large, and may possibly be continuous and therefore uncountable (such as the set of all real numbers, or all real numbers within some limited range). The set of possible output values may be finite or countably infinite.[1] The input and output sets involved in quantization can be defined in a rather general way. For example, vector quantization is the application of quantization to multi-dimensional (vector-valued) input data.[2]

Basic types of quantization

2-bit resolution with four levels of quantization compared to analog.[3]

3-bit resolution with eight levels.

Analog-to-digital converter

An analog-to-digital converter (ADC) can be modeled as two processes: sampling and quantization. Sampling converts a time-varying voltage signal into a discrete-time signal, a sequence of real numbers. Quantization replaces each real number with an approximation from a finite set of discrete values. Most commonly, these discrete values are represented as fixed-point words. Though any number of quantization levels is possible, common word-lengths are 8-bit (256 levels), 16-bit (65,536 levels) and 24-bit (16.8 million levels). Quantizing a sequence of numbers produces a sequence of quantization errors which is sometimes modeled as an additive random signal called quantization noise because of its stochastic behavior. The more levels a quantizer uses, the lower is its quantization noise power.

Rate–distortion optimization

Rate–distortion optimized quantization is encountered in source coding for lossy data compression algorithms, where the purpose is to manage distortion within the limits of the bit rate supported by a communication channel or storage medium. The analysis of quantization in this context involves studying the amount of data (typically measured in digits or bits or bit rate) that is used to represent the output of the quantizer, and studying the loss of precision that is introduced by the quantization process (which is referred to as the distortion).

Rounding example

As an example, rounding a real number $x$ to the nearest integer value forms a very basic type of quantizer – a uniform one. A typical (mid-tread) uniform quantizer with a quantization step size equal to some value $\Delta$ can be expressed as

$$Q(x) = \Delta \cdot \left\lfloor \frac{x}{\Delta} + \frac{1}{2} \right\rfloor,$$

where the notation $\lfloor\ \rfloor$ or $\operatorname{floor}(\ )$ depicts the floor function.

The essential property of a quantizer is that it has a countable set of possible output values that has fewer members than the set of possible input values. The members of the set of output values may have integer, rational, or real values. For simple rounding to the nearest integer, the step size $\Delta$ is equal to 1. With $\Delta = 1$ or with $\Delta$ equal to any other integer value, this quantizer has real-valued inputs and integer-valued outputs.

When the quantization step size ($\Delta$) is small relative to the variation in the signal being quantized, it is relatively simple to show that the mean squared error produced by such a rounding operation will be approximately $\Delta^2/12$.[4][5][6][7][8][1] Mean squared error is also called the quantization noise power. Adding one bit to the quantizer halves the value of $\Delta$, which reduces the noise power by the factor ¼. In terms of decibels, the noise power change is $10 \cdot \log_{10}\left(\tfrac{1}{4}\right) \approx -6\ \mathrm{dB}$.
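The $\Delta^2/12$ figure can be checked numerically. The sketch below is only an illustration under stated assumptions: it sweeps a deterministic, evenly spaced set of inputs over $[-1, 1]$ (standing in for a signal that varies much more than one step) and measures the mean squared rounding error. The function names and the input range are chosen for the example.

```python
import math


def quantize(x, step):
    """Mid-tread uniform quantizer: round x to the nearest multiple of step."""
    return step * math.floor(x / step + 0.5)


def mean_squared_error(step, n=100_000, lo=-1.0, hi=1.0):
    """Average squared rounding error over n evenly spaced inputs in [lo, hi]."""
    total = 0.0
    for i in range(n):
        x = lo + (hi - lo) * (i + 0.5) / n
        total += (x - quantize(x, step)) ** 2
    return total / n


coarse = mean_squared_error(0.1)
fine = mean_squared_error(0.05)             # one extra bit halves the step
print(coarse, 0.1 ** 2 / 12)                # measured vs. predicted noise power
print(10 * math.log10(fine / coarse))       # change in dB, close to -6
```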

Because the set of possible output values of a quantizer is countable, any quantizer can be decomposed into two distinct stages, which can be referred to as the classification stage (or forward quantization stage) and the reconstruction stage (or inverse quantization stage), where the classification stage maps the input value to an integer quantization index $k$ and the reconstruction stage maps the index $k$ to the reconstruction value $y_k$ that is the output approximation of the input value. For the example uniform quantizer described above, the forward quantization stage can be expressed as

$$k = \left\lfloor \frac{x}{\Delta} + \frac{1}{2} \right\rfloor,$$

and the reconstruction stage for this example quantizer is simply

$$y_k = k \cdot \Delta.$$

This decomposition is useful for the design and analysis of quantization behavior, and it illustrates how the quantized data can be communicated over a communication channel – a source encoder can perform the forward quantization stage and send the index information through a communication channel, and a decoder can perform the reconstruction stage to produce the output approximation of the original input data. In general, the forward quantization stage may use any function that maps the input data to the integer space of the quantization index data, and the inverse quantization stage can conceptually (or literally) be a table look-up operation to map each quantization index to a corresponding reconstruction value. This two-stage decomposition applies equally well to vector as well as scalar quantizers.
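A minimal sketch of this two-stage split for the uniform mid-tread quantizer, with the encoder producing integer indices and the decoder mapping them back to values. The function names `classify` and `reconstruct` and the sample values are assumptions for illustration.

```python
import math


def classify(x, step):
    """Forward quantization: map an input value to an integer index k."""
    return math.floor(x / step + 0.5)


def reconstruct(k, step):
    """Inverse quantization: map an index k back to its reconstruction value."""
    return k * step


step = 0.25
samples = [0.12, -0.49, 1.26]
indices = [classify(x, step) for x in samples]     # what an encoder would transmit
decoded = [reconstruct(k, step) for k in indices]  # what a decoder would output
print(indices)
print(decoded)
```

Only the integer indices need to cross the channel; the decoder needs nothing beyond the step size (or, for a non-uniform quantizer, a look-up table of reconstruction values).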

Mid-riser and mid-tread uniform quantizers

Most uniform quantizers for signed input data can be classified as being of one of two types: mid-riser and mid-tread. The terminology is based on what happens in the region around the value 0, and uses the analogy of viewing the input-output function of the quantizer as a stairway. Mid-tread quantizers have a zero-valued reconstruction level (corresponding to a tread of a stairway), while mid-riser quantizers have a zero-valued classification threshold (corresponding to a riser of a stairway).[9]

Mid-tread quantization involves rounding. The formulas for mid-tread uniform quantization are provided in the previous section.

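Mid-riser quantization involves truncation. The input-output formula for a mid-riser uniform quantizer is given by:

$$Q(x) = \Delta \cdot \left( \left\lfloor \frac{x}{\Delta} \right\rfloor + \frac{1}{2} \right),$$

where the classification rule is given by

$$k = \left\lfloor \frac{x}{\Delta} \right\rfloor$$

and the reconstruction rule is

$$y_k = \Delta \cdot \left( k + \tfrac{1}{2} \right).$$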

Note that mid-riser uniform quantizers do not have a zero output value – their minimum output magnitude is half the step size. In contrast, mid-tread quantizers do have a zero output level. For some applications, having a zero output signal representation may be a necessity.

In general, a mid-riser or mid-tread quantizer may not actually be a uniform quantizer – i.e., the size of the quantizer's classification intervals may not all be the same, or the spacing between its possible output values may not all be the same. The distinguishing characteristic of a mid-riser quantizer is that it has a classification threshold value that is exactly zero, and the distinguishing characteristic of a mid-tread quantizer is that it has a reconstruction value that is exactly zero.[9]
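The difference between the two uniform types is easiest to see near zero. The following sketch compares them on a few small inputs; the function names and the step size of 1 are illustrative choices.

```python
import math


def mid_tread(x, step):
    """Rounding quantizer: has an output level at exactly zero."""
    return step * math.floor(x / step + 0.5)


def mid_riser(x, step):
    """Truncating quantizer: has a decision threshold at exactly zero."""
    return step * (math.floor(x / step) + 0.5)


inputs = [-0.2, 0.0, 0.2, 0.7]
tread = [mid_tread(x, 1.0) for x in inputs]
riser = [mid_riser(x, 1.0) for x in inputs]
print(tread)  # small inputs collapse to zero
print(riser)  # outputs never reach zero; the smallest magnitude is step / 2
```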

Dead-zone quantizers

A dead-zone quantizer is a type of mid-tread quantizer with symmetric behavior around 0. The region around the zero output value of such a quantizer is referred to as the dead zone or deadband. The dead zone can sometimes serve the same purpose as a noise gate or squelch function. Especially for compression applications, the dead-zone may be given a different width than that for the other steps. For an otherwise-uniform quantizer, the dead-zone width can be set to any value $w$ by using the forward quantization rule[10][11][12]

$$k = \operatorname{sgn}(x) \cdot \max\left(0, \left\lfloor \frac{|x| - w/2}{\Delta} + 1 \right\rfloor\right),$$

where the function $\operatorname{sgn}(\ )$ is the sign function (also known as the signum function). The general reconstruction rule for such a dead-zone quantizer is given by

$$y_k = \operatorname{sgn}(k) \cdot \left( \frac{w}{2} + \Delta \cdot \left(|k| - 1 + r_k\right) \right),$$

where $r_k$ is a reconstruction offset value in the range of 0 to 1 as a fraction of the step size. Ordinarily, $0 \le r_k \le \tfrac{1}{2}$ when quantizing input data with a typical probability density function (pdf) that is symmetric around zero and reaches its peak value at zero (such as a Gaussian, Laplacian, or generalized Gaussian pdf). Although $r_k$ may depend on $k$ in general, and can be chosen to fulfill the optimality condition described below, it is often simply set to a constant, such as $\tfrac{1}{2}$. (Note that in this definition, $y_0 = 0$ due to the definition of the $\operatorname{sgn}(\ )$ function, so $r_0$ has no effect.)

A very commonly used special case (e.g., the scheme typically used in financial accounting and elementary mathematics) is to set $w = \Delta$ and $r_k = \tfrac{1}{2}$ for all $k$. In this case, the dead-zone quantizer is also a uniform quantizer, since the central dead-zone of this quantizer has the same width as all of its other steps, and all of its reconstruction values are equally spaced as well.
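The forward and reconstruction rules for a dead-zone quantizer translate directly into code. The sketch below uses a constant reconstruction offset; the function names and the example widths are assumptions for illustration.

```python
import math


def sgn(v):
    """Sign function: -1, 0 or 1."""
    return (v > 0) - (v < 0)


def deadzone_index(x, step, width):
    """Forward rule: inputs with |x| < width / 2 map to index 0."""
    return sgn(x) * max(0, math.floor((abs(x) - width / 2) / step + 1))


def deadzone_value(k, step, width, offset=0.5):
    """Reconstruction rule with a constant offset r_k = offset."""
    return sgn(k) * (width / 2 + step * (abs(k) - 1 + offset))


# A dead zone twice as wide as the other steps.
for x in (0.9, 1.2, -2.4):
    k = deadzone_index(x, step=1.0, width=2.0)
    print(x, k, deadzone_value(k, step=1.0, width=2.0))

# Special case width == step, offset 0.5: ordinary rounding, with ties away from zero.
print(deadzone_value(deadzone_index(-0.5, 1.0, 1.0), 1.0, 1.0))
```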

Granular distortion and overload distortion

Often the design of a quantizer involves supporting only a limited range of possible output values and performing clipping to limit the output to this range whenever the input exceeds the supported range. The error introduced by this clipping is referred to as overload distortion. Within the extreme limits of the supported range, the amount of spacing between the selectable output values of a quantizer is referred to as its granularity, and the error introduced by this spacing is referred to as granular distortion. It is common for the design of a quantizer to involve determining the proper balance between granular distortion and overload distortion. For a given supported number of possible output values, reducing the average granular distortion may involve increasing the average overload distortion, and vice versa. A technique for controlling the amplitude of the signal (or, equivalently, the quantization step size $\Delta$) to achieve the appropriate balance is the use of automatic gain control (AGC). However, in some quantizer designs, the concepts of granular error and overload error may not apply (e.g., for a quantizer with a limited range of input data or with a countably infinite set of selectable output values).[1]

The additive noise model for quantization error

A common assumption for the analysis of quantization error is that it affects a signal processing system in a similar manner to that of additive white noise – having negligible correlation with the signal and an approximately flat power spectral density.[5][1][13][14] The additive noise model is commonly used for the analysis of quantization error effects in digital filtering systems, and it can be very useful in such analysis. It has been shown to be a valid model in cases of high resolution quantization (small $\Delta$ relative to the signal strength) with smooth probability density functions.[5][15]

Additive noise behavior is not always a valid assumption. Quantization error (for quantizers defined as described here) is deterministically related to the signal and not entirely independent of it. Thus, periodic signals can create periodic quantization noise. And in some cases it can even cause limit cycles to appear in digital signal processing systems. One way to ensure effective independence of the quantization error from the source signal is to perform dithered quantization (sometimes with noise shaping), which involves adding random (or pseudo-random) noise to the signal prior to quantization.[1][14]
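The effect of dither can be illustrated with a constant input that sits between two quantizer levels. Without dither the error is the same on every sample; with dither the quantized output becomes correct on average. The sketch assumes rectangular (uniform) dither one step wide and a fixed random seed for repeatability; both are choices made for this example rather than something the text prescribes.

```python
import math
import random


def quantize(x, step=1.0):
    """Mid-tread uniform quantizer."""
    return step * math.floor(x / step + 0.5)


def average_output(x, n, dither, seed=0):
    """Average of n quantized copies of the constant x, with or without dither."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        # Assumed dither: uniform noise spanning one quantization step.
        noise = rng.uniform(-0.5, 0.5) if dither else 0.0
        total += quantize(x + noise)
    return total / n


plain = average_output(0.3, 20_000, dither=False)
dithered = average_output(0.3, 20_000, dither=True)
print(plain)     # stuck at 0.0: the error is fully determined by the input
print(dithered)  # close to 0.3: the error now behaves like noise around the true value
```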

Quantization error models

In the typical case, the original signal is much larger than one least significant bit (LSB). When this is the case, the quantization error is not significantly correlated with the signal, and has an approximately uniform distribution. In the rounding case, the quantization error has a mean of zero and the RMS value is the standard deviation of this distribution, given by $\tfrac{1}{\sqrt{12}}\,\mathrm{LSB} \approx 0.289\,\mathrm{LSB}$. In the truncation case the error has a non-zero mean of $\tfrac{1}{2}\,\mathrm{LSB}$ and the RMS value is $\tfrac{1}{\sqrt{3}}\,\mathrm{LSB}$. In either case, the standard deviation, as a percentage of the full signal range, changes by a factor of 2 for each 1-bit change in the number of quantizer bits. The potential signal-to-quantization-noise power ratio therefore changes by 4, or $10 \cdot \log_{10}(4) = 6.02$ decibels per bit.

At lower amplitudes the quantization error becomes dependent on the input signal, resulting in distortion. This distortion is created after the anti-aliasing filter, and if these distortions are above 1/2 the sample rate they will alias back into the band of interest. In order to make the quantization error independent of the input signal, noise with an amplitude of 2 least significant bits is added to the signal. This slightly reduces signal to noise ratio, but, ideally, completely eliminates the distortion. It is known as dither.

Quantization noise model

Quantization noise for a 2-bit ADC operating at infinite sample rate. The difference between the blue and red signals in the upper graph is the quantization error, which is 'added' to the quantized signal and is the source of noise.

Comparison of quantizing a sinusoid to 64 levels (6 bits) and 256 levels (8 bits). The additive noise created by 6-bit quantization is 12 dB greater than the noise created by 8-bit quantization. When the spectral distribution is flat, as in this example, the 12 dB difference manifests as a measurable difference in the noise floors.

Quantization noise is a model of quantization error introduced by quantization in the analog-to-digital conversion (ADC) in telecommunication systems and signal processing. It is a rounding error between the analog input voltage to the ADC and the output digitized value. The noise is non-linear and signal-dependent. It can be modelled in several different ways.

In an ideal analog-to-digital converter, where the quantization error is uniformly distributed between −1/2 LSB and +1/2 LSB, and the signal has a uniform distribution covering all quantization levels, the signal-to-quantization-noise ratio (SQNR) can be calculated from

$$\mathrm{SQNR} = 20 \log_{10}\left(2^{Q}\right) \approx 6.02 \cdot Q\ \mathrm{dB},$$

where $Q$ is the number of quantization bits.

The most common test signals that fulfill this are full amplitude triangle waves and sawtooth waves.

For example, a 16-bit ADC has a maximum signal-to-noise ratio of 6.02 × 16 = 96.3 dB.

When the input signal is a full-amplitude sine wave the distribution of the signal is no longer uniform, and the corresponding equation is instead

$$\mathrm{SQNR} \approx 1.761 + 6.02 \cdot Q\ \mathrm{dB}.$$

Here, the quantization noise is once again assumed to be uniformly distributed. When the input signal has a high amplitude and a wide frequency spectrum this is the case.[16] In this case a 16-bit ADC has a maximum signal-to-noise ratio of 98.09 dB. The 1.761 dB difference in signal-to-noise ratio occurs only because the signal is a full-scale sine wave instead of a triangle or sawtooth wave.
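The two figures quoted for a 16-bit converter can be reproduced with a short calculation. In this sketch the function names are illustrative, and the sine-wave offset is computed as $10\log_{10}(1.5)$, the power ratio between a full-scale sine wave and a full-scale uniformly distributed signal.

```python
import math


def sqnr_uniform_db(bits):
    """Ideal SQNR for a full-scale uniformly distributed signal (triangle or sawtooth)."""
    return 20 * math.log10(2 ** bits)


def sqnr_sine_db(bits):
    """Ideal SQNR for a full-scale sine wave: 10*log10(1.5), about 1.761 dB, higher."""
    return sqnr_uniform_db(bits) + 10 * math.log10(1.5)


for bits in (8, 16, 24):
    print(bits, round(sqnr_uniform_db(bits), 2), round(sqnr_sine_db(bits), 2))
```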

Quantization noise power can be derived from

$$N = \frac{(\delta \mathrm{v})^2}{12}\ \mathrm{W},$$

where $\delta \mathrm{v}$ is the voltage of the level.

(Typical real-life values are worse than this theoretical minimum, due to the addition of dither to reduce the objectionable effects of quantization, and to imperfections of the ADC circuitry. Also see noise shaping.)

For complex signals in high-resolution ADCs this is an accurate model. For low-resolution ADCs, low-level signals in high-resolution ADCs, and for simple waveforms the quantization noise is not uniformly distributed, making this model inaccurate.[17] In these cases the quantization noise distribution is strongly affected by the exact amplitude of the signal.

The calculations above, however, assume a completely filled input channel. If this is not the case - if the input signal is small - the relative quantization distortion can be very large. To circumvent this issue, analog compressors and expanders can be used, but these introduce large amounts of distortion as well, especially if the compressor does not match the expander. The application of such compressors and expanders is also known as companding.

Rate–distortion quantizer design

A scalar quantizer, which performs a quantization operation, can ordinarily be decomposed into two stages: a classification stage, which divides the input range into $M$ non-overlapping intervals $\{I_k\}_{k=1}^{M}$ by defining $M-1$ decision boundary values $\{b_k\}_{k=1}^{M-1}$ and assigns every input $x$ that falls within $I_k$ the same quantization index $k$; and a reconstruction stage, which represents each interval $I_k$ by a reconstruction value $y_k$, so that $x \in I_k$ implies $y = y_k$.

These two stages together comprise the mathematical operation of $y = Q(x)$.

Entropy coding techniques can be applied to communicate the quantization indices from a source encoder that performs the classification stage to a decoder that performs the reconstruction stage. One way to do this is to associate each quantization index $k$ with a binary codeword $c_k$. An important consideration is the number of bits used for each codeword, denoted here by $\mathrm{length}(c_k)$.

As a result, the design of an $M$-level quantizer and an associated set of codewords for communicating its index values requires finding the values of $\{b_k\}_{k=1}^{M-1}$, $\{c_k\}_{k=1}^{M}$ and $\{y_k\}_{k=1}^{M}$ which optimally satisfy a selected set of design constraints such as the bit rate $R$ and distortion $D$.

Assuming that an information source $S$ produces random variables $X$ with an associated probability density function $f(x)$, the probability $p_k$ that the random variable falls within a particular quantization interval $I_k$ is given by

$$p_k = P[x \in I_k] = \int_{b_{k-1}}^{b_k} f(x)\,dx,$$

with the extreme limits taken as $b_0 = -\infty$ and $b_M = \infty$.

The resulting bit rate $R$, in units of average bits per quantized value, for this quantizer can be derived as follows:

$$R = \sum_{k=1}^{M} p_k \cdot \mathrm{length}(c_k) = \sum_{k=1}^{M} \mathrm{length}(c_k) \int_{b_{k-1}}^{b_k} f(x)\,dx.$$

If it is assumed that distortion is measured by mean squared error, the distortion $D$ is given by:

$$D = E\left[(x - Q(x))^2\right] = \int_{-\infty}^{\infty} (x - Q(x))^2 f(x)\,dx = \sum_{k=1}^{M} \int_{b_{k-1}}^{b_k} (x - y_k)^2 f(x)\,dx.$$

Note that other distortion measures can also be considered, although mean squared error is a popular one.

A key observation is that rate $R$ depends on the decision boundaries $\{b_k\}_{k=1}^{M-1}$ and the codeword lengths $\{\mathrm{length}(c_k)\}_{k=1}^{M}$, whereas the distortion $D$ depends on the decision boundaries $\{b_k\}_{k=1}^{M-1}$ and the reconstruction levels $\{y_k\}_{k=1}^{M}$.

After defining these two performance metrics for the quantizer, a typical rate–distortion formulation for a quantizer design problem can be expressed in one of two ways: given a maximum distortion constraint $D \le D_{\max}$, minimize the bit rate $R$; or given a maximum bit rate constraint $R \le R_{\max}$, minimize the distortion $D$.

Often the solution to these problems can be equivalently (or approximately) expressed and solved by converting the formulation to the unconstrained problem $\min\left\{D + \lambda \cdot R\right\}$ where the Lagrange multiplier $\lambda$ is a non-negative constant that establishes the appropriate balance between rate and distortion. Solving the unconstrained problem is equivalent to finding a point on the convex hull of the family of solutions to an equivalent constrained formulation of the problem. However, finding a solution – especially a closed-form solution – to any of these three problem formulations can be difficult. Solutions that do not require multi-dimensional iterative optimization techniques have been published for only three probability distribution functions: the uniform,[18] exponential,[12] and Laplacian[12] distributions. Iterative optimization approaches can be used to find solutions in other cases.[1][19][20]

Note that the reconstruction values $\{y_k\}_{k=1}^{M}$ affect only the distortion – they do not affect the bit rate – and that each individual $y_k$ makes a separate contribution $d_k$ to the total distortion as shown below:

$$D = \sum_{k=1}^{M} d_k,$$

where

$$d_k = \int_{b_{k-1}}^{b_k} (x - y_k)^2 f(x)\,dx.$$

This observation can be used to ease the analysis – given the set of $\{b_k\}_{k=1}^{M-1}$ values, the value of each $y_k$ can be optimized separately to minimize its contribution to the distortion $D$.

For the mean-square error distortion criterion, it can be easily shown that the optimal set of reconstruction values $\{y_k^*\}_{k=1}^{M}$ is given by setting the reconstruction value $y_k$ within each interval $I_k$ to the conditional expected value (also referred to as the centroid) within the interval, as given by:

$$y_k^* = \frac{1}{p_k} \int_{b_{k-1}}^{b_k} x f(x)\,dx.$$

The use of sufficiently well-designed entropy coding techniques can result in the use of a bit rate that is close to the true information content of the indices $\{k\}_{k=1}^{M}$, such that effectively

$$\mathrm{length}(c_k) \approx -\log_2\left(p_k\right)$$

and therefore

$$R = \sum_{k=1}^{M} -p_k \cdot \log_2\left(p_k\right).$$

The use of this approximation can allow the entropy coding design problem to be separated from the design of the quantizer itself. Modern entropy coding techniques such as arithmetic coding can achieve bit rates that are very close to the true entropy of a source, given a set of known (or adaptively estimated) probabilities $\{p_k\}_{k=1}^{M}$.
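As a small worked example of this approximation, the sketch below computes the entropy-based rate for a set of index probabilities and compares it with the rate of a fixed-length code for the same number of levels. The probabilities are made up for illustration, and the function names are assumptions.

```python
import math


def entropy_rate(probs):
    """Average bits per index when each codeword has the ideal length -log2(p_k)."""
    return sum(-p * math.log2(p) for p in probs if p > 0)


def fixed_length_rate(levels):
    """Bits per index for a fixed-length code over the given number of levels."""
    return math.ceil(math.log2(levels))


probs = [0.5, 0.25, 0.125, 0.125]      # hypothetical index probabilities, M = 4
print(entropy_rate(probs))             # 1.75 bits per index
print(fixed_length_rate(len(probs)))   # 2 bits per index
```

The gap between the two numbers is the saving that entropy coding offers when the indices are not equally likely; for equal probabilities the two rates coincide.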

In some designs, rather than optimizing for a particular number of classification regions $M$, the quantizer design problem may include optimization of the value of $M$ as well. For some probabilistic source models, the best performance may be achieved when $M$ approaches infinity.

Neglecting the entropy constraint: Lloyd–Max quantization

In the above formulation, if the bit rate constraint is neglected by setting $\lambda$ equal to 0, or equivalently if it is assumed that a fixed-length code (FLC) will be used to represent the quantized data instead of a variable-length code (or some other entropy coding technology such as arithmetic coding that is better than an FLC in the rate–distortion sense), the optimization problem reduces to minimization of distortion $D$ alone.

The indices produced by an $M$-level quantizer can be coded using a fixed-length code using $R = \lceil \log_2 M \rceil$ bits/symbol. For example, when $M = 256$ levels, the FLC bit rate $R$ is 8 bits/symbol. For this reason, such a quantizer has sometimes been called an 8-bit quantizer. However, using an FLC eliminates the compression improvement that can be obtained by use of better entropy coding.

Assuming an FLC with $M$ levels, the rate–distortion minimization problem can be reduced to distortion minimization alone. The reduced problem can be stated as follows: given a source $X$ with pdf $f(x)$ and the constraint that the quantizer must use only $M$ classification regions, find the decision boundaries $\{b_k\}_{k=1}^{M-1}$ and reconstruction levels $\{y_k\}_{k=1}^{M}$ to minimize the resulting distortion

$$D = E\left[(x - Q(x))^2\right] = \int_{-\infty}^{\infty} (x - Q(x))^2 f(x)\,dx = \sum_{k=1}^{M} \int_{b_{k-1}}^{b_k} (x - y_k)^2 f(x)\,dx.$$

Finding an optimal solution to the above problem results in a quantizer sometimes called a MMSQE (minimum mean-square quantization error) solution, and the resulting pdf-optimized (non-uniform) quantizer is referred to as a Lloyd–Max quantizer, named after two people who independently developed iterative methods[1][21][22] to solve the two sets of simultaneous equations resulting from $\partial D / \partial b_k = 0$ and $\partial D / \partial y_k = 0$, as follows:

$$\frac{\partial D}{\partial b_k} = 0 \;\Rightarrow\; b_k = \frac{y_k + y_{k+1}}{2},$$

which places each threshold at the midpoint between each pair of reconstruction values, and

$$\frac{\partial D}{\partial y_k} = 0 \;\Rightarrow\; y_k = \frac{\int_{b_{k-1}}^{b_k} x f(x)\,dx}{\int_{b_{k-1}}^{b_k} f(x)\,dx} = \frac{1}{p_k} \int_{b_{k-1}}^{b_k} x f(x)\,dx,$$

which places each reconstruction value at the centroid (conditional expected value) of its associated classification interval.

Lloyd's Method I algorithm, originally described in 1957, can be generalized in a straightforward way for application to vector data. This generalization results in the Linde–Buzo–Gray (LBG) or k-means classifier optimization methods. Moreover, the technique can be further generalized in a straightforward way to also include an entropy constraint for vector data.[23]
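The alternation between the midpoint condition and the centroid condition can be sketched as a sample-based iteration, which is in effect one-dimensional k-means. This is a simplified illustration rather than Lloyd's or Max's original formulation: it works on a list of samples instead of a known pdf, and the function name, the initial levels and the iteration count are assumptions.

```python
from bisect import bisect_right


def lloyd_max(samples, levels, iterations=100):
    """Alternate midpoint thresholds and centroid levels on a list of samples."""
    levels = sorted(levels)
    for _ in range(iterations):
        # Midpoint condition: each threshold lies halfway between adjacent levels.
        thresholds = [(a + b) / 2 for a, b in zip(levels, levels[1:])]
        sums = [0.0] * len(levels)
        counts = [0] * len(levels)
        for x in samples:
            k = bisect_right(thresholds, x)  # classification stage
            sums[k] += x
            counts[k] += 1
        # Centroid condition: each level moves to the mean of its cell
        # (an empty cell keeps its previous level).
        levels = [s / c if c else y for s, c, y in zip(sums, counts, levels)]
    thresholds = [(a + b) / 2 for a, b in zip(levels, levels[1:])]
    return thresholds, levels


# Evenly spaced samples standing in for a uniform source on [0, 1).
samples = [(i + 0.5) / 1000 for i in range(1000)]
thresholds, levels = lloyd_max(samples, [0.1, 0.3, 0.6, 0.9], iterations=200)
print(levels)      # approaches 0.125, 0.375, 0.625, 0.875
print(thresholds)  # approaches 0.25, 0.5, 0.75
```

For this uniform source the result is a uniform quantizer; for a source with a peaked distribution the same iteration places the levels more densely where samples are more common.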

Uniform quantization and the 6 dB/bit approximation

The Lloyd–Max quantizer is actually a uniform quantizer when the input pdf is uniformly distributed over the range $[y_1 - \Delta/2,\ y_M + \Delta/2)$. However, for a source that does not have a uniform distribution, the minimum-distortion quantizer may not be a uniform quantizer.

The analysis of a uniform quantizer applied to a uniformly distributed source can be summarized in what follows:

A symmetric source $X$ can be modelled with $f(x) = \tfrac{1}{2X_{\max}}$ for $x \in [-X_{\max}, X_{\max}]$ and 0 elsewhere. The step size is $\Delta = \tfrac{2X_{\max}}{M}$, and the signal to quantization noise ratio (SQNR) of the quantizer is

$$\mathrm{SQNR} = 10\log_{10}\frac{\sigma_x^2}{\sigma_q^2} = 10\log_{10}\frac{(M\Delta)^2/12}{\Delta^2/12} = 10\log_{10}M^2 = 20\log_{10}M.$$

For a fixed-length code using $N$ bits, $M = 2^N$, resulting in

$$\mathrm{SQNR} = 20\log_{10}2^N = N \cdot (20\log_{10}2) = N \cdot 6.0206\ \mathrm{dB},$$

or approximately 6 dB per bit. For example, for $N = 8$ bits, $M = 256$ levels and SQNR ≈ 8 × 6 = 48 dB; and for $N = 16$ bits, $M = 65536$ and SQNR ≈ 16 × 6 = 96 dB. The property of 6 dB improvement in SQNR for each extra bit used in quantization is a well-known figure of merit. However, it must be used with care: this derivation is only for a uniform quantizer applied to a uniform source.

For other source pdfs and other quantizer designs, the SQNR may be somewhat different from that predicted by 6 dB/bit, depending on the type of pdf, the type of source, the type of quantizer, and the bit rate range of operation.

However, it is common to assume that for many sources, the slope of a quantizer SQNR function can be approximated as 6 dB/bit when operating at a sufficiently high bit rate. At asymptotically high bit rates, cutting the step size in half increases the bit rate by approximately 1 bit per sample (because 1 bit is needed to indicate whether the value is in the left or right half of the prior double-sized interval) and reduces the mean squared error by a factor of 4 (i.e., 6 dB) based on the $\Delta^2/12$ approximation.

At asymptotically high bit rates, the 6 dB/bit approximation is supported for many source pdfs by rigorous theoretical analysis.[5][6][8][1] Moreover, the structure of the optimal scalar quantizer (in the rate–distortion sense) approaches that of a uniform quantizer under these conditions.[8][1]

Other fields

Many physical quantities are actually quantized by physical entities. Examples of fields where this limitation applies include electronics (due to electrons), optics (due to photons), biology (due to DNA), physics (due to Planck limits) and chemistry (due to molecules). This limitation is sometimes known in these fields as the 'quantum noise limit'.


Retrieved from 'https://en.wikipedia.org/w/index.php?title=Quantization_(signal_processing)&oldid=898476304'
